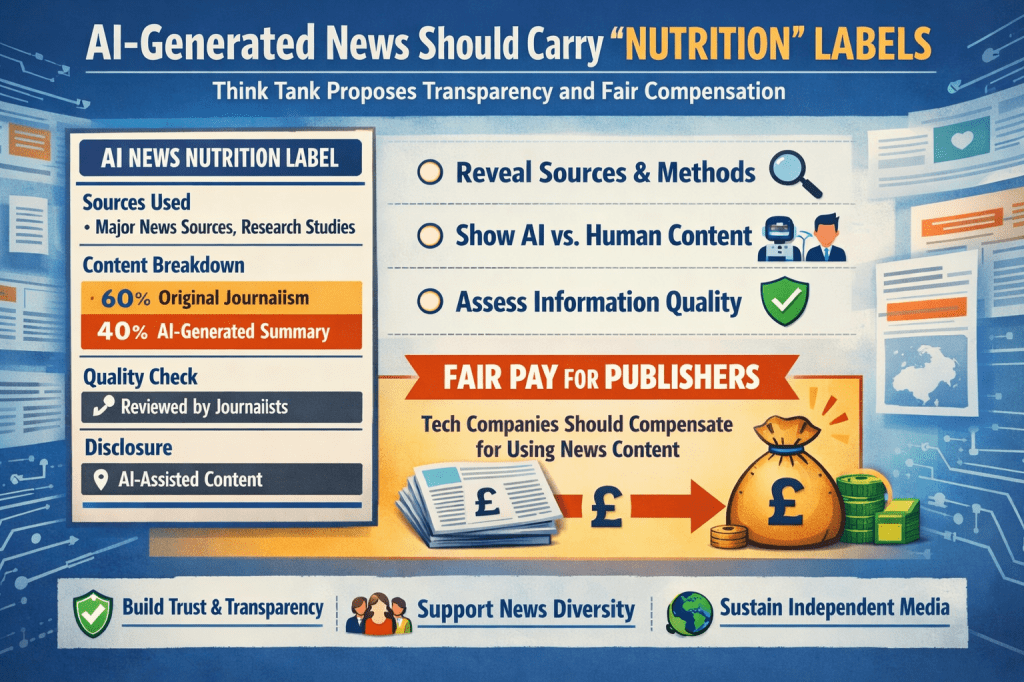

A UK policy institute has argued that news content generated or summarised by artificial intelligence should carry clear “nutrition”-style transparency labels, and that technology companies should compensate news publishers whose journalism is used by AI systems. The proposals are intended to strengthen public trust in information, protect media diversity, and support the long-term sustainability of professional journalism as generative AI becomes more widely used.

What Are “Nutrition” Labels for AI News?

The proposed labels are designed to work in a similar way to food nutrition labels: not to restrict consumption, but to inform users about what they are consuming.

Applied to AI-generated or AI-assisted news, these labels would indicate:

- Whether the content was fully generated by AI, written by journalists, or written by journalists and summarised by AI

- The types of sources used, such as professional news outlets, public reports, or other datasets

- How recent the information is

- Whether the material is based on original reporting or secondary sources

The aim is to give readers basic but essential context, allowing them to better judge accuracy, reliability, and potential bias.

As AI tools are increasingly used for news briefings, summaries, and search responses, transparency becomes more important, particularly when users may not realise that AI systems are shaping or filtering the information they receive.

Who Is Calling for This — and Why?

The proposals were set out by the Institute for Public Policy Research (IPPR), one of the UK’s leading policy thinktanks. The IPPR argues that AI companies and platforms are rapidly becoming powerful intermediaries in how people access news, much as search engines and social media platforms did in earlier years.

Key concerns highlighted include:

- AI tools are increasingly used as direct sources of news, rather than just productivity aids

- Decisions about which publishers are summarised or cited can shape public understanding

- Smaller, local, or public-interest news organisations risk being underrepresented or excluded

Without clear disclosure, readers may assume that AI-generated summaries are neutral or comprehensive, even though they reflect design choices, training data, and commercial arrangements.

Why Use the “Nutrition Label” Concept?

The “nutrition label” analogy is intended to be familiar and practical. Just as food labels provide basic facts without prescribing behaviour, AI news labels would aim to:

- Improve clarity and accountability

- Support informed decision-making

- Increase confidence in legitimate journalism

- Encourage more responsible deployment of AI in news distribution

The focus is not on banning AI in journalism, but on making its role visible and understandable.

The Compensation Issue: Paying for Journalism

Alongside transparency, the IPPR argues that news publishers should be paid when their content is used to train AI systems or generate summaries.

The reasoning is straightforward:

- Modern AI systems rely heavily on large volumes of existing journalism

- Many publishers currently receive little or no payment for this use

- Continued unpaid use risks weakening the financial foundations of newsrooms, particularly local and investigative outlets

The thinktank supports the development of formal licensing or collective bargaining mechanisms, which would give publishers a structured way to negotiate fair compensation instead of depending on voluntary agreements.

Broader Implications: Trust, Diversity, and Democracy

The issue extends beyond technology to the health of public information systems.

Trust in Information

Clear labelling can help users distinguish between original reporting, AI-assisted summaries, and fully AI-generated content, reducing confusion and mistrust.

Media Diversity

If AI systems consistently prioritise large or well-resourced publishers, smaller outlets may lose visibility, narrowing the range of perspectives available to the public.

Sustainable Journalism

Additional revenue streams linked to AI use could help support local reporting, investigative work, and public-interest journalism, which are already under financial pressure.

Practical and Regulatory Challenges

The proposals also raise complex implementation questions:

- How governments can regulate AI-mediated news without restricting innovation or free expression

- How labels can be standardised while remaining accurate and meaningful

- How transparency measures interact with broader efforts to improve media literacy

Despite these challenges, there is growing agreement among policymakers and researchers that AI’s expanding role in news production and distribution requires clear standards and accountability.

Key Points

- The IPPR, a UK thinktank, proposes “nutrition” labels for AI-generated and AI-assisted news

- The goal is greater transparency, trust, and user understanding

- Tech companies are urged to compensate publishers whose journalism supports AI systems

- The debate reflects wider concerns about media diversity, public trust, and the quality of the information that democratic debate depends on